Decimal multiplier of micro prefix. “Nanotechnology”, “nanoscience” and “nanoobjects”: what does “nano” mean?


Metric system and International System of Units (SI)

Introduction

In this article we will talk about the metric system and its history. We will see how and why it began and how it gradually evolved into what we have today. We will also look at the SI system, which was developed from the metric system of measures.

For our ancestors, who lived in a world full of dangers, the ability to measure quantities in their surroundings brought them closer to understanding natural phenomena and gave them some means of influencing their environment. That is why people tried to invent and improve measurement systems. At the dawn of human development, having a measurement system was no less important than it is now. Measurements were needed to build housing, sew clothes of different sizes and prepare food, and, of course, trade and exchange could not do without them. Many believe that the creation and adoption of the International System of Units (SI) is the most significant achievement not only of science and technology, but of human development in general.

Early measurement systems

In early measurement and number systems, people used traditional objects to measure and compare. For example, it is believed that the decimal system appeared due to the fact that we have ten fingers and toes. Our hands are always with us - that's why since ancient times people have used (and still use) fingers for counting. Still, we haven't always used the base 10 system for counting, and the metric system is a relatively new invention. Each region developed its own systems of units and, although these systems have much in common, most systems are still so different that converting units of measurement from one system to another has always been a problem. This problem became more and more serious as trade between different peoples developed.

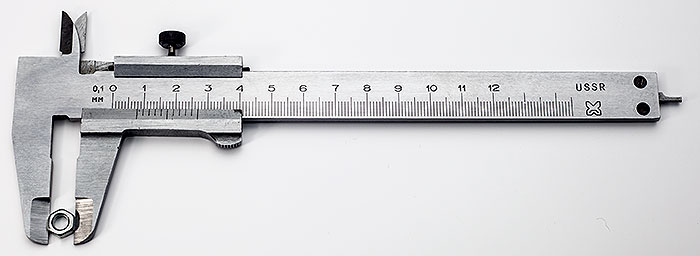

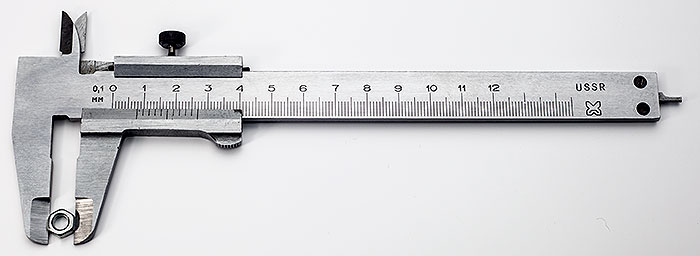

The accuracy of the first systems of weights and measures directly depended on the size of the objects that surrounded the people who developed these systems. It is clear that the measurements were inaccurate, since the “measuring devices” did not have exact dimensions. For example, parts of the body were commonly used as a measure of length; mass and volume were measured using the volume and mass of seeds and other small objects whose dimensions were more or less the same. Below we will take a closer look at such units.

Length measures

In ancient Egypt, length was first measured simply in cubits, and later in royal cubits. The cubit was defined as the distance from the bend of the elbow to the tip of the extended middle finger, and the royal cubit was defined as the cubit of the reigning pharaoh. A model cubit was created and made available to the general public so that everyone could make their own length measures. This was, of course, an arbitrary unit that changed whenever a new ruler took the throne. Ancient Babylon used a similar system, with minor differences.

The cubit was divided into smaller units: the palm, the hand, the zerets (foot) and the finger, which were represented by the widths of the palm, the hand (with thumb), the foot and the finger, respectively. People also agreed on how many fingers made up a palm (4), a hand (5) and a cubit (28 in Egypt and 30 in Babylon). This was more convenient and more accurate than measuring the ratios every time.
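The agreed ratios between finger, palm, hand and cubit can be sketched as a small conversion table. This is only an illustration: the names `FINGERS_PER_UNIT` and `convert` are hypothetical, and applying the palm and hand ratios to Babylon as well as Egypt is an assumption, since the text gives a separate figure only for the cubit.

```python
from fractions import Fraction

# Number of fingers in each unit, per the ratios quoted in the text.
# Assumption: palm = 4 and hand = 5 fingers in both systems.
FINGERS_PER_UNIT = {
    "egypt": {"finger": 1, "palm": 4, "hand": 5, "cubit": 28},
    "babylon": {"finger": 1, "palm": 4, "hand": 5, "cubit": 30},
}


def convert(amount, src, dst, system="egypt"):
    """Convert `amount` of unit `src` into unit `dst` via the finger."""
    table = FINGERS_PER_UNIT[system]
    return Fraction(amount) * table[src] / table[dst]


print(convert(1, "cubit", "palm"))             # 7
print(convert(1, "cubit", "palm", "babylon"))  # 15/2
```

Exact fractions are used so that ratios such as 30/4 are not rounded.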

Measures of mass and weight

Measures of weight were also based on the properties of various objects. Seeds, grains, beans and similar items served as weights. A classic example of a unit of mass still in use today is the carat. Nowadays the weight of precious stones and pearls is measured in carats; originally, a carat was the weight of a seed of the carob tree. The tree is cultivated in the Mediterranean, and its seeds have a nearly constant mass, so they were convenient to use as a measure of weight. Different places used different seeds as small units of weight, and larger units were usually multiples of the smaller ones. Archaeologists often find such larger weights, usually made of stone. They were equal to 60, 100 or some other number of small units. Since there was no uniform standard for the number of small units, or for their weight, conflicts arose when sellers and buyers from different places met.

Volume measures

Initially, volume was also measured using small objects. For example, the volume of a pot or jug was determined by filling it to the top with small objects of roughly standard volume, such as seeds. However, the lack of standardization led to the same problems in measuring volume as in measuring mass.

Evolution of various systems of measures

The ancient Greek system of measures was based on the ancient Egyptian and Babylonian ones, and the Romans created their system based on the ancient Greek one. Then, through fire and sword and, of course, through trade, these systems spread throughout Europe. It should be noted that here we are talking only about the most common systems. But there were many other systems of weights and measures, because exchange and trade were necessary for absolutely everyone. If there was no written language in the area or it was not customary to record the results of the exchange, then we can only guess at how these people measured volume and weight.

There are many regional variations in systems of measures and weights. This is due to their independent development and the influence of other systems on them as a result of trade and conquest. There were different systems not only in different countries, but often within the same country, where each trading city had its own, because local rulers did not want unification in order to maintain their power. As travel, trade, industry, and science developed, many countries sought to unify systems of weights and measures, at least within their own countries.

Already in the 13th century, and possibly earlier, scientists and philosophers discussed the creation of a unified measurement system. However, it was only after the French Revolution, and the subsequent colonization of various regions of the world by France and other European countries that already had their own systems of weights and measures, that a new system was developed and adopted in most countries of the world. This new system was the decimal metric system. It was built on base 10: for any physical quantity there was one basic unit, and all other units were formed from it in a standard way using decimal prefixes. Each such multiple or submultiple unit could be divided into ten smaller units, each of these could in turn be divided into ten still smaller units, and so on.

As we have seen, most early measurement systems were not based on base 10. The convenience of a base-10 system is that the number system we are familiar with has the same base, which lets us convert quickly from smaller units to larger ones and vice versa using simple, familiar rules. Many scientists believe that the choice of ten as the base of the number system is arbitrary and connected only with the fact that we have ten fingers; if we had a different number of fingers, we would probably use a different number system.

Metric system

In the early days of the metric system, man-made prototypes were used as standards of length and weight, as in previous systems. The metric system has since evolved from a system based on material standards, and dependent on their accuracy, to a system based on natural phenomena and fundamental physical constants. For example, the unit of time, the second, was initially defined as a fraction of the tropical year 1900. The disadvantage of this definition was that it could not be verified experimentally in later years. Therefore, the second was redefined as 9,192,631,770 periods of the radiation corresponding to the transition between two hyperfine levels of the ground state of the cesium-133 atom at rest at 0 K. The unit of distance, the meter, was for a time tied to the wavelength of a spectral line of the isotope krypton-86, but was later redefined as the distance that light travels in a vacuum in 1/299,792,458 of a second.
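The definition of the meter through the speed of light can be checked with a short calculation. The sketch below is illustrative only; the function name `light_distance_m` is hypothetical, and exact fractions are used so that the result is not affected by floating-point rounding.

```python
from fractions import Fraction

# Speed of light in vacuum, in meters per second.
# The value is exact because the meter is defined through it.
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def light_distance_m(seconds):
    """Distance in meters that light travels in vacuum in `seconds`."""
    return SPEED_OF_LIGHT_M_PER_S * Fraction(seconds)


# Light covers exactly one meter in 1/299,792,458 of a second.
print(light_distance_m(Fraction(1, 299_792_458)))  # 1

# In one nanosecond light covers about 0.3 m.
print(float(light_distance_m(Fraction(1, 10**9))))
```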

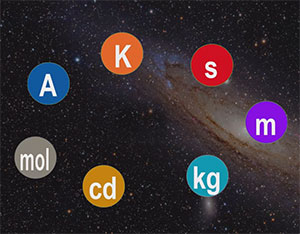

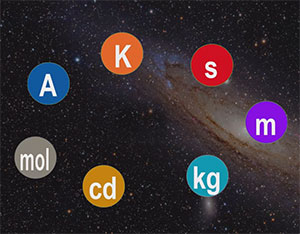

The International System of Units (SI) was created based on the metric system. It should be noted that traditionally the metric system includes units of mass, length and time, but in the SI system the number of base units has been expanded to seven. We will discuss them below.

International System of Units (SI)

The International System of Units (SI) has seven base units for measuring the base quantities (mass, time, length, luminous intensity, amount of substance, electric current and thermodynamic temperature). These are the kilogram (kg) for mass, the second (s) for time, the meter (m) for distance, the candela (cd) for luminous intensity, the mole (mol) for amount of substance, the ampere (A) for electric current, and the kelvin (K) for temperature.

Until 2019 the kilogram was the only unit still defined by a man-made standard; since then it, too, has been defined through a physical constant (the Planck constant), so all base units now rest on universal physical constants or natural phenomena. This is convenient because the constants or phenomena on which the units are based can be verified at any time, and there is no danger of a standard being lost or damaged. There is also no need to create copies of standards to make them available in different parts of the world. This eliminates errors associated with the accuracy of copying physical objects, and thus provides greater accuracy.

Decimal prefixes

Multiples and submultiples that differ from an SI unit by an integer power of ten are formed with prefixes attached to the name of the unit. The following table lists the prefixes from yotta to yocto and the decimal factors they represent:

| Prefix | Symbol | Numerical value (spaces separate groups of digits; the decimal separator is a period) | Exponential notation |
|---|---|---|---|
| yotta | Y | 1 000 000 000 000 000 000 000 000 | 10²⁴ |
| zetta | Z | 1 000 000 000 000 000 000 000 | 10²¹ |
| exa | E | 1 000 000 000 000 000 000 | 10¹⁸ |
| peta | P | 1 000 000 000 000 000 | 10¹⁵ |
| tera | T | 1 000 000 000 000 | 10¹² |
| giga | G | 1 000 000 000 | 10⁹ |
| mega | M | 1 000 000 | 10⁶ |
| kilo | k | 1 000 | 10³ |
| hecto | h | 100 | 10² |
| deca | da | 10 | 10¹ |
| (no prefix) | | 1 | 10⁰ |
| deci | d | 0.1 | 10⁻¹ |
| centi | c | 0.01 | 10⁻² |
| milli | m | 0.001 | 10⁻³ |
| micro | µ | 0.000001 | 10⁻⁶ |
| nano | n | 0.000000001 | 10⁻⁹ |
| pico | p | 0.000000000001 | 10⁻¹² |
| femto | f | 0.000000000000001 | 10⁻¹⁵ |
| atto | a | 0.000000000000000001 | 10⁻¹⁸ |
| zepto | z | 0.000000000000000000001 | 10⁻²¹ |
| yocto | y | 0.000000000000000000000001 | 10⁻²⁴ |

For example, 5 gigameters is equal to 5,000,000,000 meters, while 3 microcandelas is equal to 0.000003 candelas. It is interesting to note that, despite the presence of a prefix in the unit kilogram, it is the base unit of the SI. Therefore, the above prefixes are applied with the gram as if it were a base unit.
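The examples above amount to shifting a value by a power of ten. A minimal sketch of such a conversion follows; the names `PREFIX_EXPONENT` and `convert_prefix` are hypothetical, and the exponents are the standard SI values for the prefixes from yotta to yocto.

```python
from fractions import Fraction

# Power of ten for each SI prefix; "" stands for "no prefix".
PREFIX_EXPONENT = {
    "yotta": 24, "zetta": 21, "exa": 18, "peta": 15, "tera": 12,
    "giga": 9, "mega": 6, "kilo": 3, "hecto": 2, "deca": 1, "": 0,
    "deci": -1, "centi": -2, "milli": -3, "micro": -6, "nano": -9,
    "pico": -12, "femto": -15, "atto": -18, "zepto": -21, "yocto": -24,
}


def convert_prefix(value, src, dst=""):
    """Re-express `value`, given with prefix `src`, using prefix `dst`."""
    shift = PREFIX_EXPONENT[src] - PREFIX_EXPONENT[dst]
    return Fraction(value) * Fraction(10) ** shift


print(convert_prefix(5, "giga"))          # 5000000000 (meters in 5 Gm)
print(float(convert_prefix(3, "micro")))  # 3e-06 (candelas in 3 µcd)
print(convert_prefix(1, "mega", "giga"))  # 1/1000
```

Working with `Fraction` keeps very small factors such as 10⁻²⁴ exact; convert to `float` only for display.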

At the time of writing, only three countries have not adopted the SI system: the United States, Liberia and Myanmar. In Canada and the UK, traditional units are still widely used, even though SI is the official system of units in these countries. It is enough to walk into a store and see prices quoted per pound (the price looks lower that way), or to try to buy building materials measured in meters and kilograms, which simply will not work. The same goes for packaging, where everything is labeled in grams, kilograms and liters, but the quantities are not round numbers because they were converted from pounds, ounces, pints and quarts. Refrigerator shelves for milk are likewise sized for half-gallon or gallon containers, not for liter cartons.



Decimal prefixes are established by the International System of Units (SI), but their use is not limited to SI, and many date back to the advent of the metric system (1790s).

Requirements for units of quantities used in the Russian Federation are established by Federal Law No. 102-FZ of June 26, 2008, “On ensuring the uniformity of measurements”. In particular, the law provides that the names of units of quantities allowed for use in the Russian Federation, their designations, the rules for writing them and the rules for their use are established by the Government of the Russian Federation. To implement this provision, on October 31, 2009 the Government of the Russian Federation adopted the “Regulations on units of quantities allowed for use in the Russian Federation”, Appendix No. 5 of which contains the decimal factors, prefixes and prefix designations used to form multiple and submultiple units. The same appendix gives rules for the prefixes and their designations. In addition, the use of SI in Russia is regulated by the standard GOST 8.417-2002.

Except in specially specified cases, the “Regulations on units of quantities allowed for use in the Russian Federation” allow the use of both Russian and international designations of units, but prohibit using the two simultaneously.

Prefixes for multiples

Multiple units are units that are an integer number of times (some power of 10) greater than the basic unit of measurement of a physical quantity. The International System of Units (SI) recommends the following decimal prefixes for multiple units:

| Decimal multiplier | Prefix (Russian) | Prefix (international) | Symbol (Russian) | Symbol (international) | Example |
|---|---|---|---|---|---|
| 10¹ | дека | deca | да | da | dal - decaliter |
| 10² | гекто | hecto | г | h | hPa - hectopascal |
| 10³ | кило | kilo | к | k | kN - kilonewton |
| 10⁶ | мега | mega | М | M | MPa - megapascal |
| 10⁹ | гига | giga | Г | G | GHz - gigahertz |
| 10¹² | тера | tera | Т | T | TV - teravolt |
| 10¹⁵ | пета | peta | П | P | PFLOPS - petaflops |
| 10¹⁸ | экса | exa | Э | E | Em - exameter |
| 10²¹ | зетта | zetta | З | Z | ZeV - zettaelectronvolt |
| 10²⁴ | иотта | yotta | И | Y | Yg - yottagram |

Application of decimal prefixes to units of information quantity

The Regulations on units of quantities allowed for use in the Russian Federation establish that the name and designation of the unit of information quantity “byte” (1 byte = 8 bits) are used with the binary prefixes “Kilo”, “Mega” and “Giga”, which correspond to the multipliers 2¹⁰, 2²⁰ and 2³⁰ (1 KB = 1024 bytes, 1 MB = 1024 KB, 1 GB = 1024 MB).

The same Regulations also allow the use of the international designation for the unit of information with the prefixes “K”, “M” and “G” (KB, MB, GB, Kbyte, Mbyte, Gbyte).

In programming and the computer industry, the same prefixes “kilo”, “mega”, “giga”, “tera” and so on, when applied to quantities that are naturally counted in powers of two (e.g. bytes), can mean a multiple of either 1000 or 1024 = 2¹⁰. Which system is meant is sometimes clear from the context (for example, a factor of 1024 is used for the amount of RAM, and a factor of 1000 for the total capacity of hard drives).

| Unit | Power of 1024 | Power of 2 | Value |
|---|---|---|---|
| 1 kilobyte | 1024¹ | 2¹⁰ | 1024 bytes |
| 1 megabyte | 1024² | 2²⁰ | 1,048,576 bytes |
| 1 gigabyte | 1024³ | 2³⁰ | 1,073,741,824 bytes |
| 1 terabyte | 1024⁴ | 2⁴⁰ | 1,099,511,627,776 bytes |
| 1 petabyte | 1024⁵ | 2⁵⁰ | 1,125,899,906,842,624 bytes |
| 1 exabyte | 1024⁶ | 2⁶⁰ | 1,152,921,504,606,846,976 bytes |
| 1 zettabyte | 1024⁷ | 2⁷⁰ | 1,180,591,620,717,411,303,424 bytes |
| 1 yottabyte | 1024⁸ | 2⁸⁰ | 1,208,925,819,614,629,174,706,176 bytes |

To avoid confusion, in April 1999 the International Electrotechnical Commission introduced a separate set of binary prefixes (kibi, mebi, gibi and so on).
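The gap between the two readings of the same prefix can be made concrete with a short calculation. The sketch below is only an illustration; the helper names `binary_multiplier` and `decimal_multiplier` are hypothetical.

```python
PREFIX_NAMES = ["kilo", "mega", "giga", "tera", "peta", "exa", "zetta", "yotta"]


def binary_multiplier(n):
    """Multiplier of the n-th prefix read as a power of 1024 (2**(10*n))."""
    return 1024 ** n


def decimal_multiplier(n):
    """Multiplier of the n-th prefix read as a power of 1000."""
    return 1000 ** n


for n, name in enumerate(PREFIX_NAMES, start=1):
    binary = binary_multiplier(n)
    decimal = decimal_multiplier(n)
    excess = (binary - decimal) / decimal * 100  # relative difference, %
    print(f"1 {name}byte: {binary:,} vs {decimal:,} bytes (+{excess:.1f}%)")
```

The relative difference grows with each step, from about 2.4% for kilo to about 20.9% for yotta, which is why the ambiguity matters more for large capacities.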

Prefixes for submultiple units

Submultiple units are a fixed fraction of the established unit of measurement of a quantity. The International System of Units (SI) recommends the following prefixes for submultiple units:

| Decimal multiplier | Prefix (Russian) | Prefix (international) | Symbol (Russian) | Symbol (international) | Example |
|---|---|---|---|---|---|
| 10⁻¹ | деци | deci | д | d | dm - decimeter |
| 10⁻² | санти | centi | с | c | cm - centimeter |
| 10⁻³ | милли | milli | м | m | mN - millinewton |
| 10⁻⁶ | микро | micro | мк | µ | µm - micrometer |
| 10⁻⁹ | нано | nano | н | n | nm - nanometer |
| 10⁻¹² | пико | pico | п | p | pF - picofarad |
| 10⁻¹⁵ | фемто | femto | ф | f | fL - femtoliter |
| 10⁻¹⁸ | атто | atto | а | a | as - attosecond |
| 10⁻²¹ | зепто | zepto | з | z | zC - zeptocoulomb |
| 10⁻²⁴ | иокто | yocto | и | y | yg - yoctogram |

Origin of prefixes

Prefixes were introduced into SI gradually. In 1960, the XI General Conference on Weights and Measures (CGPM) adopted a number of prefix names and corresponding symbols for factors ranging from 10⁻¹² to 10¹². Prefixes for 10⁻¹⁵ and 10⁻¹⁸ were added by the XII CGPM in 1964, and for 10¹⁵ and 10¹⁸ by the XV CGPM in 1975. A further addition took place at the XIX CGPM in 1991, when prefixes for the factors 10⁻²⁴, 10⁻²¹, 10²¹ and 10²⁴ were adopted.

Most prefixes are derived from ancient Greek words. Deca- comes from ancient Greek δέκα, “ten”; hecto- from ἑκατόν, “one hundred”; kilo- from χίλιοι, “thousand”; mega- from μέγας, “big”; giga- from γίγας, “giant”; and tera- from τέρας, “monster”. Peta- (ancient Greek πέντε) and exa- (ancient Greek ἕξ) correspond to the fifth and sixth powers of a thousand and are translated as “five” and “six”, respectively. The submultiple prefixes micro- (from ancient Greek μικρός) and nano- (from ancient Greek νᾶνος) are translated as “small” and “dwarf”. The prefixes yotta- (1000⁸) and yocto- (1/1000⁸) are both formed from the single ancient Greek word ὀκτώ (okto), meaning “eight”.

The prefix milli- goes back to the Latin mille, which also means “thousand”. The prefixes centi-, from centum (“one hundred”), and deci-, from decimus (“tenth”), also have Latin roots, as does zetta-, from septem (“seven”). Zepto- (“seven”) comes from the Latin septem or from the French sept.

The prefix atto- is derived from the Danish atten (“eighteen”). Femto- goes back to the Danish and Norwegian femten, or to the Old Norse fimmtān, and means “fifteen”.

The name of the prefix pico- comes from the Italian piccolo, “small”.
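Several of these etymologies encode the power of a thousand that the prefix stands for. The sketch below checks that reading against the decimal factors; the dictionary `POWER_OF_THOUSAND` and the function `factor` are hypothetical names, and the sign convention (negative for submultiples) is an assumption made for the illustration.

```python
from fractions import Fraction

# Number word behind each prefix, read as a power of 1000.
# Negative values mark submultiple prefixes.
POWER_OF_THOUSAND = {
    "peta": 5,    # "five"
    "exa": 6,     # "six"
    "zetta": 7,   # "seven"
    "yotta": 8,   # "eight"
    "zepto": -7,  # "seven"
    "yocto": -8,  # "eight"
}


def factor(prefix):
    """Decimal factor of `prefix`, computed as a power of 1000."""
    return Fraction(1000) ** POWER_OF_THOUSAND[prefix]


print(factor("peta") == 10**15)                # True
print(factor("yotta") == 10**24)               # True
print(factor("yocto") == Fraction(1, 10**24))  # True
```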

Nature is continuous, and any definition requires the establishment of some boundaries. Therefore, formulating definitions is a rather thankless task. Nevertheless, this must be done, since a clear definition allows one to separate one phenomenon from another, identify significant differences between them and thus gain a deeper understanding of the phenomena themselves. Therefore, the purpose of this essay is to try to understand the meaning of today’s fashionable terms with the prefix “nano” (from the Greek word for “dwarf”) - “nanoscience”, “nanotechnology”, “nanoobject”, “nanomaterial”.

Although these issues have been discussed many times, with varying degrees of depth, in specialized and popular science literature, an analysis of the literature and personal experience show that broad scientific circles, not to mention non-scientific ones, still lack a clear understanding of both the problem itself and the definitions. That is why we will try to define all the terms listed above, focusing the reader’s attention on the meaning of the basic concept of “nanoobject”. We invite the reader to consider with us whether anything fundamentally distinguishes nanoobjects from their larger and smaller “brothers” that “inhabit” the world around us. We also invite the reader to take part in a series of thought experiments on the design and synthesis of nanostructures. Finally, we will try to show that it is in the nanoscale range that the nature of physical and chemical interactions changes, and that this happens in precisely the region of the size scale where the boundary between living and inanimate nature lies.

But first: where did all this come from, why was the prefix “nano” introduced, what is decisive in classifying materials as nanostructures, why are nanoscience and nanotechnology singled out as separate fields, and what in this separation rests (if anything does) on truly scientific foundations?

What is “nano” and where did it all start?

“Nano” is a prefix showing that the original value must be reduced a billion times, that is, divided by one followed by nine zeros (1,000,000,000). For example, 1 nanometer is a billionth of a meter (1 nm = 10⁻⁹ m). To get an idea of how small 1 nm is, let us perform the following thought experiment (Fig. 1). If we reduce the diameter of our planet (12,750 km = 12.75 × 10⁶ m ≈ 10⁷ m) by a factor of 100 million (10⁸), we get about 10⁻¹ m. This is approximately the size of a soccer ball (the standard diameter of a soccer ball is 22 cm, but on our scale the difference is insignificant; for us 2.2 × 10⁻¹ m ≈ 10⁻¹ m). Now let us reduce the diameter of the soccer ball by the same factor of 100 million (10⁸), and only then do we arrive at the size of a nanoparticle, 1 nm (approximately the diameter of the fullerene molecule C₆₀, which is shaped like a soccer ball; see Fig. 1).
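The two scaling steps of this thought experiment can be reproduced numerically. The sketch below uses the diameters quoted in the text; the helper `order_of_magnitude` is a hypothetical name, and it takes the exponent of the leading power of ten, which matches the rounding used in the text (12.75 × 10⁶ ≈ 10⁷, 2.2 × 10⁻¹ ≈ 10⁻¹).

```python
import math

EARTH_DIAMETER_M = 12.75e6     # 12,750 km, as quoted in the text
SOCCER_BALL_DIAMETER_M = 0.22  # 22 cm, as quoted in the text
SHRINK_FACTOR = 1e8            # 100 million


def order_of_magnitude(x):
    """Exponent of the leading power of ten, e.g. 0.1275 -> -1."""
    return math.floor(math.log10(x))


shrunk_earth = EARTH_DIAMETER_M / SHRINK_FACTOR
shrunk_ball = SOCCER_BALL_DIAMETER_M / SHRINK_FACTOR

print(f"Earth / 10^8: {shrunk_earth:.4f} m, "
      f"order 10^{order_of_magnitude(shrunk_earth)} m")
print(f"ball / 10^8: {shrunk_ball * 1e9:.1f} nm, "
      f"order 10^{order_of_magnitude(shrunk_ball)} m")
```

The shrunken Earth comes out at roughly 13 cm and the shrunken ball at roughly 2 nm, so both steps agree with the text to within an order of magnitude.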

It is noteworthy that the prefix “nano” has long been used in scientific literature, but for objects that are far from nanoscale. In particular, it appears in dinosaur terminology, for objects billions of times larger than 1 nm. Nanotyrannus and Nanosaurus are dwarf dinosaurs measuring 5 m and 1.3 m, respectively. They really are “dwarfs” compared with other dinosaurs, which exceed 10 m in size (reaching up to 50 m) and can weigh 30–40 tons or more. This example emphasizes that the prefix “nano” in itself carries no physical meaning and only indicates scale.

Today, however, this prefix is used to designate a new era in the development of technology, sometimes called the fourth industrial revolution: the era of nanotechnology.

It is often said that the nanotechnology era began in 1959 with Richard Feynman's lecture “There's Plenty of Room at the Bottom”. The main postulate of the lecture was that, from the standpoint of the fundamental laws of physics, the author saw no obstacles to working at the molecular and atomic levels and manipulating individual atoms or molecules. Feynman said that certain devices can be used to make even smaller devices, which in turn can make still smaller ones, and so on down to the atomic level; that is, with the appropriate technology, individual atoms can be manipulated.

To be fair, however, it should be noted that Feynman was not the first to come up with this. In particular, the idea of creating manipulators that gradually decrease in size was expressed back in 1931 by the writer Boris Zhitkov in his science fiction story “Microhands”. We cannot resist giving short quotes from this story to let the reader appreciate the writer’s insight for himself:

“I racked my brains for a long time, and this is what I arrived at: I will make small hands, an exact copy of mine. Even if they are twenty or thirty times smaller, they will have flexible fingers like mine; they will clench into a fist, straighten out and take the same positions as my living hands. And I made them...

But a thought suddenly struck me: I can make micro-hands for my small hands. I can make the same gloves for them as I made for my living hands and, using the same system, connect them to handles ten times smaller than my micro-hands, and then... I will have real micro-hands that scale my movements down two hundred times. With these hands I will break into a realm of life so small that it has only been seen, but where no one has yet put their hands to work. And I got to work...

I wanted to make true micro hands, ones that I could use to grab the particles of matter that matter is made of, those unimaginably small particles that are only visible through an ultramicroscope. I wanted to get into that area where the human mind loses all idea of size - it seems that there are no sizes, everything is so unimaginably small.”

But it is not just a matter of literary predictions. People have long used in everyday life what are now called nanoobjects or, if you like, nanotechnologies. One of the most striking examples (literally and figuratively) is colored glass. For example, the Lycurgus Cup, made in the 4th century AD and kept in the British Museum, is green when lit from the outside but purple-red when lit from the inside. Recent electron microscopy studies have shown that this unusual effect is due to nano-sized particles of gold and silver in the glass. We can therefore safely say that the Lycurgus Cup is made of a nanocomposite material.

As it turns out now, in the Middle Ages, metallic nanodust was often added to glass to make stained glass. Variations in glass color depend on differences in the particles added - the nature of the metal used and the size of its particles. It was recently discovered that these glasses also have bactericidal properties, that is, they not only provide a beautiful play of light in the room, but also disinfect the environment.

If we look at the development of science historically, we can discern a general vector: the penetration of the natural sciences ever deeper into matter. Movement along this vector is determined by the development of the means of observation. At first, people studied the ordinary world, which required no special instruments to observe. Observations at this level laid the foundations of biology (the classification of the living world by C. Linnaeus and others) and led to the theory of evolution (C. Darwin, 1859). When the telescope appeared, people were able to make astronomical observations (G. Galileo, 1609), which resulted in the law of universal gravitation and classical mechanics (I. Newton, 1642–1727). When Leeuwenhoek's microscope appeared (1674), people penetrated into the microworld (size interval 1 mm - 0.1 mm). At first this meant only contemplating small organisms invisible to the naked eye. Only at the end of the 19th century did L. Pasteur first clarify the nature and functions of microorganisms. Around the same time (late 19th - early 20th centuries) a revolution took place in physics: scientists began to penetrate inside the atom and study its structure. Again, this was made possible by new methods and tools, which now used the smallest particles of matter as probes. In 1909, using alpha particles (helium nuclei about 10⁻¹³ m in size), Rutherford managed to “see” the nucleus of a gold atom. The Bohr-Rutherford planetary model of the atom, based on these experiments, gives a vivid picture of the enormous “free” space inside the atom, quite comparable to the cosmic emptiness of the Solar System. It was voids of this order that Feynman had in mind in his lecture. Using the same α-particles, Rutherford carried out the first nuclear reaction in 1919, converting nitrogen into oxygen. This is how physicists entered the pico- and femto-size intervals, and in the first half of the last century an understanding of the structure of matter at the atomic and subatomic levels led to the creation of quantum mechanics.

The World of Neglected Dimensions

Historically, it so happened that almost all regions of the size scale (Fig. 2) were “covered” by research, except for the region of nanosizes. However, the world is not without visionaries. At the beginning of the 20th century, W. Ostwald published the book “The World of Neglected Dimensions”, devoted to what was then a new field, colloid chemistry, which dealt precisely with nanometer-sized particles (although the term was not yet in use). Already in this book he noted that, at some point, the subdivision of matter leads to new properties, and that the properties of a material as a whole depend on the size of its particles.

At the beginning of the twentieth century, particles of this size could not yet be “seen”, since they lie below the resolution limit of a light microscope. It is therefore no coincidence that the invention of the electron microscope by M. Knoll and E. Ruska in 1931 is considered one of the first milestones in the emergence of nanotechnology. Only after this was humanity able to “see” objects of submicron and nanometer sizes. And then everything falls into place: the main criterion by which humanity accepts (or rejects) new facts and phenomena is expressed in the words of doubting Thomas: “Until I see, I will not believe.”

The next step was taken in 1981, when G. Binnig and H. Rohrer created the scanning tunneling microscope, which made it possible not only to obtain images of individual atoms but also to manipulate them. In other words, the technology that R. Feynman spoke about in his lecture had been created. That is when the era of nanotechnology began.

Note that here again we are dealing with a familiar story. Familiar, because humanity generally tends to ignore whatever is even slightly ahead of its time. The example of nanotechnology shows that nothing new was discovered; people simply began to understand better what was happening around them and what had been done since ancient times, if not unconsciously then at least without understanding the underlying physics and chemistry (the craftsmen knew what they wanted to obtain). It is a separate matter that having a technology does not yet mean understanding the essence of the process. People learned to make steel long ago, but an understanding of the physical and chemical foundations of steelmaking came much later. One may recall here that the secret of Damascus steel has still not been uncovered. That is a different situation: we know what we need to obtain, but not how. So the relationship between science and technology is not always simple.

Who was the first to study nanomaterials in the modern sense? In 1981, the German scientist H. Gleiter first used the term “nanocrystalline”. He formulated the concept of creating nanomaterials and developed it in a series of works in 1981–1986, introducing the terms “nanocrystalline”, “nanostructured”, “nanophase” and “nanocomposite” materials. These works emphasized the critical role of the many interfaces in nanomaterials as the basis for changing the properties of solids.

Another of the most important events in the history of nanotechnology and in the development of the concept of nanoparticles was the discovery, from the mid-1980s to the early 1990s, of carbon nanostructures (fullerenes and carbon nanotubes), followed in the 21st century by the discovery of a method for producing graphene.

But let's get back to the definitions.

First definitions: everything is very simple

At first everything was very simple. In 2000, US President B. Clinton signed the document “National Nanotechnology Initiative”, which gives the following definition: nanotechnology includes the creation of technologies and research at the atomic, molecular and macromolecular levels, in the range of approximately 1 to 100 nm, in order to understand the fundamental principles of phenomena and properties of materials at the nanoscale, as well as the creation and use of structures, equipment and systems that have new properties and functions determined by their size.

In 2003, the UK government asked the Royal Society and the Royal Academy of Engineering for their opinion on the need to develop nanotechnology and for an assessment of the benefits and problems its development might bring. The resulting report, “Nanoscience and nanotechnologies: opportunities and uncertainties”, appeared in July 2004 and, as far as we know, gave separate definitions of nanoscience and nanotechnology for the first time:

Nanoscience is the study of phenomena and objects at the atomic, molecular and macromolecular levels, the characteristics of which differ significantly from the properties of their macro-analogs.

Nanotechnology is the design, characterization, production and application of structures, devices and systems whose properties are determined by their shape and size at the nanometer level.

Thus, the term “nanotechnology” refers to a set of technological techniques that make it possible to create nanoobjects and/or manipulate them. All that remains is to define nanoobjects. This turns out to be not so simple, so most of the article is devoted to this definition.

To begin with, here is the formal definition most widely used today:

Nanoobjects (nanoparticles) are objects (particles) with a characteristic size of 1–100 nanometers in at least one dimension.

Everything seems clear enough, except for one thing: why are the lower and upper limits set so strictly at 1 and 100 nm? The choice appears arbitrary, and the upper limit is especially suspicious. Why not 70 or 150 nm? After all, given the diversity of nanoobjects in nature, the boundaries of the nano-section of the size scale can and should be considerably blurred. And in general, precise boundaries cannot be drawn in nature: some objects pass smoothly into others, and this happens over an interval rather than at a point.

Before talking about boundaries, let's try to understand what physical meaning the concept of a “nanoobject” carries and why it needs a separate definition.

As noted above, only at the end of the 20th century did the understanding emerge (or rather, take hold) that the nanoscale range of the structure of matter has its own peculiarities, and that at this level matter has properties that do not manifest themselves in the macroworld. Some English terms are hard to translate into Russian; one of them is “bulk material”, which can be roughly rendered as “a large amount of substance”, “bulk substance” or “continuous medium”. Some properties of bulk materials may begin to change once the size of their constituent particles falls below a certain value. In this case one speaks of a transition to the nanostate of the substance, to nanomaterials.

And this happens because as the particle size decreases, the fraction of atoms located on their surface and their contribution to the properties of the object become significant and increase with a further decrease in size (Fig. 3).

But why does an increase in the fraction of surface atoms significantly affect the properties of particles?

The so-called surface phenomena have been known for a long time: surface tension, capillary phenomena, surface activity, wetting, adsorption, adhesion and so on. All of these phenomena arise because the interaction forces between the particles that make up a body are not compensated at its surface (Fig. 4). In other words, atoms at the surface (of a crystal or a liquid, it does not matter) are in special conditions. In crystals, for example, the forces that hold atoms at the nodes of the crystal lattice act on surface atoms only from below. Therefore, the properties of these “surface” atoms differ from the properties of the same atoms in the bulk.

Since the number of surface atoms in nanoobjects increases sharply (Fig. 3), their contribution to the properties of the nanoobject becomes decisive and grows with a further decrease in the size of the object. This is precisely one of the reasons for the manifestation of new properties at the nanolevel.
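The growth of the surface fraction is easy to estimate with a toy model. The sketch below assumes a simple cubic cluster of n × n × n atoms and an interatomic spacing of 0.25 nm; both the model and the spacing are illustrative assumptions, not values taken from this article or from Fig. 3.

```python
def surface_fraction(n: int) -> float:
    """Fraction of atoms lying on the surface of an n x n x n simple cubic cluster."""
    if n < 1:
        raise ValueError("n must be at least 1")
    total = n ** 3
    inner = max(n - 2, 0) ** 3  # atoms that do not lie on any face
    return (total - inner) / total


# Assumed interatomic spacing in nanometers (illustrative only).
SPACING_NM = 0.25

for n in (4, 40, 400, 4000):
    edge_nm = n * SPACING_NM
    print(f"edge ~ {edge_nm:g} nm: {surface_fraction(n):.1%} of atoms on the surface")
```

In this model almost all atoms of a 1 nm cluster are surface atoms, while at an edge of about 1 µm the surface fraction falls well below one percent, which is the qualitative trend described above.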

Another reason for this change in properties is that the laws of quantum mechanics begin to manifest themselves at this size level; that is, the nanoscale is precisely the level of transition from the reign of classical mechanics to the reign of quantum mechanics. And, as is well known, transition states are the most unpredictable of all.

By the middle of the 20th century, people learned to work both with a mass of atoms and with a single atom.

Subsequently, it became obvious that a “little bunch of atoms” is something else, not quite similar to either a mass of atoms or an individual atom.

Scientists and technologists probably first came face to face with this problem in semiconductor physics. In their quest for miniaturization, they reached particle sizes (several tens of nanometers or less) at which optical and electronic properties began to differ sharply from those of particles of “regular” sizes. It was then that it finally became clear that the nanoscale is a special region, distinct from the realm of macroparticles or continuous media.

Therefore, the most significant point in the above definitions of nanoscience and nanotechnology is that “real nano” begins with the emergence of new properties of substances that are associated with the transition to these scales and differ from the properties of bulk materials. That is, the most important quality of nanoparticles, and their main difference from micro- and macroparticles, is the appearance of fundamentally new properties that do not appear at other sizes. We have already given literary examples; we use this technique again to show clearly the differences between macro-, micro- and nanoobjects.

The hero of Leskov's tale, Lefty (Levsha), is often mentioned as an “early” nanotechnologist. However, this is wrong. Lefty's main achievement is that he forged tiny nails [“I worked on something smaller than these horseshoes: I forged the nails that hold the horseshoes on, and no microscope can make those out anymore”]. But these nails, although very small, remained nails and did not lose their main function, which is to hold the horseshoe. So the example of Lefty is an example of miniaturization (microminiaturization, if you like), i.e. reducing the size of an object without changing its functional and other properties.

But the already mentioned story by B. Zhitkov describes precisely the change in properties:

“I needed to draw out a thin wire, that is, one of a thickness that would be like a hair for my living hands. I worked and watched through the microscope as the micro-hands drew out the copper. Thinner, thinner; there were still five more draws to go, and then the wire broke. It didn't even tear; it crumbled as if it were made of clay. It crumbled into fine sand. And this was red copper, famous for its ductility.”

Note that the Wikipedia article on nanotechnology gives the increase in the hardness of copper as one example of how properties change with decreasing size. (One wonders how B. Zhitkov knew about this in 1931.)

Nanoobjects: quantum planes, threads and dots. Carbon nanostructures

At the end of the 20th century, the existence of a certain region of particle sizes of matter - the region of nanosizes - finally became obvious. Physicists, clarifying the definition of nano-objects, claim that the upper limit of the nano-section of the size scale apparently coincides with the size of the manifestation of the so-called low-dimensional effects or the effect of dimensionality reduction.

Let's try to translate this last statement from the language of physicists into plain language.

We live in a three-dimensional world. All real objects around us have certain dimensions in all three dimensions, or, as physicists say, they have dimension 3.

Let's conduct the following thought experiment. Take a three-dimensional, bulk sample of some material, preferably a homogeneous crystal. Let it be a cube with an edge length of 1 cm. This sample has certain physical properties that do not depend on its size. Near the outer surface of the sample, the properties may differ from those in the bulk. However, the relative fraction of surface atoms is small, so the contribution of the surface to the properties can be neglected (in the language of physicists, this requirement means that the sample is bulk). Now let's cut the cube in half: two of its characteristic dimensions remain the same, and one, say the height d, is halved. What will happen to the properties of the sample? They will not change. Let's repeat the procedure and measure the property that interests us. We will get the same result. Repeating the experiment many times, we will finally reach a certain critical size d*, below which the property we measure begins to depend on the size d. Why? At d ≤ d* the contribution of surface atoms to the properties becomes significant and continues to grow as d decreases further.
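To get a feel for how many cuts the thought experiment takes, one can simply count halvings. The sketch below starts from the 1 cm cube of the text; the two trial values of d* (100 nm and 1 nm) are assumptions chosen to bracket the nanometer range, and the function name is hypothetical.

```python
def halvings_to_reach(start_m: float, d_star_m: float) -> int:
    """Count how many times a thickness must be halved to fall to d_star or below."""
    if start_m <= 0 or d_star_m <= 0:
        raise ValueError("sizes must be positive")
    d = start_m
    count = 0
    while d > d_star_m:
        d /= 2  # one more cut: the height d is halved
        count += 1
    return count


CUBE_EDGE_M = 1e-2  # the 1 cm cube from the thought experiment

for d_star_m in (100e-9, 1e-9):  # assumed trial values of d*
    n = halvings_to_reach(CUBE_EDGE_M, d_star_m)
    print(f"d* = {d_star_m * 1e9:g} nm is reached after {n} halvings")
```

Under these assumptions the critical size is reached after 17 halvings for d* = 100 nm and 24 for d* = 1 nm, so "many times" in the text means only a couple of dozen cuts.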

Physicists say that when d ≤ d* our sample exhibits a quantum size effect in one dimension. For them, the sample is no longer three-dimensional (which sounds absurd to any ordinary person, because d, although small, is not zero!): its dimension has been reduced to two. The sample itself is called a quantum plane, or a quantum well, by analogy with the term “potential well” often used in physics.

If d ≤ d* in two dimensions, the sample is called a one-dimensional quantum object, a quantum thread or a quantum wire. For zero-dimensional objects, or quantum dots, d ≤ d* in all three dimensions.

Naturally, the critical size d* is not the same for different materials, and even for a single material it can vary considerably depending on which property we measured in our experiment or, in other words, on which critical length scale of a physical phenomenon determines this property (the mean free path of electrons or phonons, the de Broglie wavelength, the diffusion length, the penetration depth of an external electromagnetic field or of acoustic waves, etc.).

However, it turns out that for all the variety of phenomena occurring in organic and inorganic materials, in living and inanimate nature, the value of d* lies approximately in the range 1–100 nm. Thus, “nanoobject” (“nanostructure”, “nanoparticle”) is simply another name for a “quantum-size structure”. It is an object for which d ≤ d* in at least one dimension. These are particles of reduced dimensionality, particles with an increased proportion of surface atoms. It is therefore most logical to classify them by the degree of dimensionality reduction: 2D - quantum planes, 1D - quantum threads, 0D - quantum dots.
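The classification by degree of dimensionality reduction can be written down as a short rule: count how many of the three sizes are at or below d*. The sketch below is only an illustration; the default d* of 100 nm and the sample sizes are assumed round numbers, and `classify` is a hypothetical helper name.

```python
from typing import Sequence

# Label chosen by the number of dimensions in which d <= d*.
LABELS = {
    0: "3D (bulk sample)",
    1: "2D (quantum plane)",
    2: "1D (quantum thread)",
    3: "0D (quantum dot)",
}


def classify(dims_nm: Sequence[float], d_star_nm: float = 100.0) -> str:
    """Classify an object by how many of its three sizes are at or below d*."""
    if len(dims_nm) != 3:
        raise ValueError("exactly three sizes are expected")
    confined = sum(1 for d in dims_nm if d <= d_star_nm)
    return LABELS[confined]


# Illustrative sizes in nanometers (assumed, not measured values).
print(classify((1e7, 1e7, 1e7)))       # a 1 cm cube
print(classify((5000.0, 5000.0, 0.3)))  # a thin flake
print(classify((1.0, 1.0, 1e5)))        # a long thin tube
print(classify((0.7, 0.7, 0.7)))        # a small cage molecule
```

Because d* depends on the material and on the property being measured, the same object could fall into different classes for different properties; the single threshold here is a simplification.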

The entire spectrum of reduced dimensions can be easily explained and, most importantly, observed experimentally using the example of carbon nanoparticles.

The discovery of carbon nanostructures was a very important milestone in the development of the nanoparticle concept.

Carbon is only the eleventh most abundant element in nature, but thanks to the unique ability of its atoms to bond with each other and form long molecules that include other elements as substituents, a huge variety of organic compounds, and even Life itself, arose. But even when combining only with itself, carbon can form a large set of structures with very diverse properties, the so-called allotropic modifications. Diamond, for example, is a standard of transparency and hardness and a dielectric. Graphite, by contrast, is an ideal “absorber” of light, an ultra-soft material (in a certain direction) and one of the best conductors of heat and electricity (in the plane perpendicular to that direction). Yet both materials consist only of carbon atoms!

But all this is at the macro level. The transition to the nanolevel reveals new, unique properties of carbon. It turned out that the “love” of carbon atoms for each other is so great that, without the participation of other elements, they can form a whole set of nanostructures that differ from one another, including in size. These include fullerenes, graphene, nanotubes, nanocones, etc. (Fig. 5).

Let us note that carbon nanostructures can be called “true” nanoparticles, since in them, as can be clearly seen in Fig. 5, all their constituent atoms lie on the surface.

But let's return to graphite itself. Graphite is the most common and thermodynamically stable modification of elemental carbon. It has a three-dimensional crystal structure consisting of parallel atomic layers, each of which is a dense packing of hexagons (Fig. 6). A carbon atom sits at each vertex of every hexagon, and the sides of the hexagons represent strong covalent bonds between carbon atoms, 0.142 nm long. The distance between the layers, however, is quite large (0.334 nm), so the bonding between layers is rather weak (here one speaks of van der Waals interaction).

This crystal structure explains the peculiar physical properties of graphite. First, its low hardness and the ease with which it separates into tiny flakes. This is how pencil leads write: graphite flakes peel off and remain on the paper. Second, the already mentioned pronounced anisotropy of its physical properties, above all its electrical and thermal conductivity.

Any layer of the three-dimensional structure of graphite can be regarded as a giant planar structure of dimension 2D. This two-dimensional structure, built only of carbon atoms, is called “graphene”. Obtaining such a structure is “relatively” easy, at least in a thought experiment. Let's take a graphite pencil lead and start writing. The height d of the lead will decrease. With enough patience, at some point d will become equal to d*, and we will obtain a quantum plane (2D).

For a long time, the problem of stability of flat two-dimensional structures in a free state (without a substrate) in general and graphene in particular, as well as the electronic properties of graphene, were the subject of only theoretical studies. More recently, in 2004, a group of physicists led by A. Geim and K. Novoselov obtained the first samples of graphene, which revolutionized this field, since such two-dimensional structures turned out to be, in particular, capable of exhibiting amazing electronic properties, qualitatively different from anything previously observed. Therefore, today hundreds of experimental groups are studying the electronic properties of graphene.

If we roll a graphene layer one atom thick into a cylinder so that the hexagonal network of carbon atoms closes without seams, we “construct” a single-walled carbon nanotube. Single-walled nanotubes with diameters from 0.43 to 5 nm have been obtained experimentally. Characteristic geometric features of nanotubes are record values of specific surface area (on average ~1600 m²/g for single-walled tubes) and of the length-to-diameter ratio (100,000 and higher). Thus, nanotubes are 1D nanoobjects, quantum threads.
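The order of magnitude of the specific surface area can be checked with a purely geometric estimate. The sketch below takes the C–C bond length of 0.142 nm quoted earlier, treats the wall as an ideal hexagonal sheet with two atoms per hexagon, and uses the standard molar mass of carbon and the Avogadro constant; it ignores tube ends, curvature and bundling, so it is an idealization rather than a model of a measured sample.

```python
import math

BOND_LENGTH_M = 0.142e-9        # C-C bond length in the hexagonal layer
MOLAR_MASS_G_PER_MOL = 12.011   # standard atomic weight of carbon
AVOGADRO_PER_MOL = 6.02214076e23


def sheet_area_per_gram(sides: int = 1) -> float:
    """Geometric surface area (m^2/g) of an ideal hexagonal carbon sheet.

    sides=1 counts one face of the sheet (e.g. the outer wall of a closed tube),
    sides=2 counts both faces (a free-standing sheet).
    """
    if sides not in (1, 2):
        raise ValueError("sides must be 1 or 2")
    hexagon_area = 1.5 * math.sqrt(3) * BOND_LENGTH_M ** 2
    area_per_atom = hexagon_area / 2  # each hexagon holds two atoms on average
    mass_per_atom_g = MOLAR_MASS_G_PER_MOL / AVOGADRO_PER_MOL
    return sides * area_per_atom / mass_per_atom_g


print(f"one face:  {sheet_area_per_gram(1):.0f} m^2/g")
print(f"two faces: {sheet_area_per_gram(2):.0f} m^2/g")
```

The one-face estimate comes out at roughly 1300 m²/g and the two-face estimate at twice that, so the average of ~1600 m²/g quoted for single-walled tubes lies in the range this simple geometry suggests.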

Multi-walled carbon nanotubes were also observed in the experiments (Fig. 7). They consist of coaxial cylinders inserted one into the other, the walls of which are at a distance (about 3.5 Å) close to the interplanar distance in graphite (0.334 nm). The number of walls can vary from 2 to 50.

If we place a piece of graphite in an inert gas atmosphere (helium or argon) and irradiate it with a powerful pulsed laser beam or concentrated sunlight, we can evaporate the material of the graphite target (note that for this the target surface temperature must be at least 2700 °C). Under these conditions, a plasma of individual carbon atoms forms above the target surface; the atoms are carried away by the flow of cold gas, which cools the plasma and leads to the formation of carbon clusters. It turns out that under certain clustering conditions the carbon atoms close up into a spherical cage molecule, C60, with dimension 0D (i.e., a quantum dot), already shown in Fig. 1.

This spontaneous formation of the C60 molecule in carbon plasma was discovered in a joint experiment by H. Kroto, R. Curl and R. Smalley, carried out over ten days in September 1985. We refer the inquisitive reader to the book by E. A. Katz, “Fullerenes, carbon nanotubes and nanoclusters: A genealogy of forms and ideas”, which describes in detail the fascinating history of this discovery and the events preceding it (with brief excursions into the history of science back to the Renaissance and even Antiquity) and explains the name of the new molecule, strange at first glance (and only at first glance): buckminsterfullerene, in honor of the architect R. Buckminster Fuller (see also the book [Piotrovsky, Kiselev, 2006]).

It was subsequently discovered that there is a whole family of carbon molecules - fullerenes - in the form of convex polyhedra, consisting only of hexagonal and pentagonal faces (Fig. 8).
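The face counts of such polyhedra follow from Euler's formula V − E + F = 2. The sketch below assumes that every carbon atom is bonded to exactly three neighbours and that all faces are pentagons or hexagons; it only does the counting and does not check whether a cage with a given number of atoms can actually be built. The function name is hypothetical.

```python
def fullerene_faces(n_atoms: int) -> tuple[int, int]:
    """Return (pentagons, hexagons) for a closed cage of n_atoms three-coordinated atoms."""
    if n_atoms < 20 or n_atoms % 2:
        raise ValueError("n_atoms must be an even number of at least 20")
    edges = 3 * n_atoms // 2        # each atom has 3 bonds, each bond joins 2 atoms
    faces = 2 - n_atoms + edges     # Euler's formula: V - E + F = 2
    # With p pentagons and h hexagons: p + h = F and 5p + 6h = 2E, hence p = 6F - 2E.
    pentagons = 6 * faces - 2 * edges
    hexagons = faces - pentagons
    return pentagons, hexagons


for n in (20, 60, 70):
    p, h = fullerene_faces(n)
    print(f"C{n}: {p} pentagons, {h} hexagons")
```

Under these assumptions the number of pentagons is always 12, whatever the size of the cage; for C60 this gives the familiar 12 pentagons and 20 hexagons.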

It was the discovery of fullerenes that was a kind of magical “golden key” to the new world of nanometer structures made of pure carbon and caused an explosion of work in this area. To date, a large number of different carbon clusters have been discovered with a fantastic (in the literal sense of the word!) variety of structure and properties.

But let's return to nanomaterials.

Nanomaterials are materials whose structural units are nanoobjects (nanoparticles). Figuratively speaking, the edifice of a nanomaterial is built from nanoobject bricks. It is therefore most productive to classify nanomaterials by the dimensionality of both the nanomaterial sample itself (the external dimensions of the matrix) and its constituent nanoobjects. The most detailed classification of this kind is given in the work. The 36 classes of nanostructures presented in this work describe the entire variety of nanomaterials, some of which (like the above-mentioned fullerenes, or carbon nanopeapods) have already been successfully synthesized, while others are still awaiting experimental realization.

Why is it not that simple?

So, we can strictly define the concepts of “nanoscience”, “nanotechnology” and “nanomaterials” that interest us only if we understand what a “nanoobject” is.

“Nanoobject”, in turn, has two definitions. The first, simpler (technological) one: objects (particles) with a characteristic size of approximately 1–100 nanometers in at least one dimension. The second, more scientific (physical) one: an object of reduced dimensionality (for which d ≤ d* in at least one dimension).

As far as we know, there are no other definitions.

However, one cannot fail to notice that the scientific definition also has a serious drawback: unlike the technological one, it specifies only the upper limit of nanosizes. Should there be a lower limit? In our opinion, of course there should. The first reason follows directly from the physical essence of the scientific definition, since most of the dimensionality-reduction effects discussed above are quantum confinement effects, or phenomena of a resonant nature. In other words, they are observed when the characteristic length of the effect matches the size of the object, i.e. not only for d ≤ d*, as already discussed, but also only if d exceeds a certain lower limit d** (d** ≤ d ≤ d*). Obviously, the value of d** may vary for different phenomena, but it must exceed the size of atoms.

Let us illustrate this with carbon compounds. Polycyclic aromatic hydrocarbons (PAHs) such as naphthalene, benzpyrene, chrysene, etc. are formally analogs of graphene. Moreover, the largest known PAH has the formula C222H44 and contains 10 benzene rings along its diagonal. However, PAHs do not have the amazing properties of graphene and cannot be considered nanoparticles. The same applies to nanodiamonds: down to ~4–5 nm these are nanodiamonds, but higher diamondoids (analogs of adamantane whose structure is built from fused diamond cells) come close to this boundary and even cross it.

So, if in the limit the size of an object in all three dimensions equals the size of an atom, then a crystal composed of such 0-dimensional objects, for example, will not be a nanomaterial but an ordinary atomic crystal. This is obvious. It is equally obvious that the number of atoms in a nanoobject must exceed one. If all three dimensions d of a nanoobject are less than d**, it ceases to be one. Such an object must be described in the language used for individual atoms.

And what if not all three dimensions but only one, say, is that small? Does such an object remain a nanoobject? Of course it does. One such object is the already mentioned graphene. The fact that the characteristic size of graphene in one dimension equals the diameter of a carbon atom does not deprive it of the properties of a nanomaterial. And these properties are absolutely unique. Conductivity, the Shubnikov-de Haas effect and the quantum Hall effect have been measured in graphene films of atomic thickness. Experiments have confirmed that graphene is a semiconductor with a zero band gap, and that at the points where the valence band and the conduction band touch, the energy spectrum of electrons and holes is linear in the wave vector. Particles with zero effective mass, in particular photons, neutrinos and relativistic particles, have this kind of spectrum. The difference between photons and the massless carriers in graphene is that the latter are fermions and are charged. At present, these massless charged Dirac fermions have no analogs among the known elementary particles. Today, graphene is of great interest both for testing many theoretical predictions of quantum electrodynamics and the theory of relativity and for creating new nanoelectronic devices, in particular ballistic and single-electron transistors.

For our discussion, it is very important that the size region closest to the concept of a nanoobject is the one in which so-called mesoscopic phenomena occur. This is the smallest size region for which it is reasonable to speak not of the properties of individual atoms or molecules but of the properties of the material as a whole (for example, when determining its temperature, density or conductivity). Mesoscopic dimensions fall exactly in the range of 1–100 nm. (The prefix “meso-” comes from the Greek word for “middle”, that is, intermediate between atomic and macroscopic dimensions.)

Everyone knows that psychology deals with the behavior of individuals, and sociology deals with the behavior of large groups of people. So, relationships in a group of 3-4 people can be characterized by analogy as meso-phenomena. In the same way, as mentioned above, a small bunch of atoms is something that is neither like a “heap” of atoms nor like an individual atom.

Here we should note another important feature of nanoobjects. Although carbon nanotubes and fullerenes, unlike graphene, are formally 1- and 0-dimensional objects respectively, in essence this is not entirely true. Or rather, that is not all they are. The point is that a nanotube is the same monatomic 2D graphene layer rolled into a cylinder, and a fullerene is a 2D carbon layer of monatomic thickness closed over the surface of a sphere. That is, the properties of nanoobjects depend significantly not only on their size but also on their topological characteristics or, simply put, on their shape.

So, the correct scientific definition of a nanoobject should be as follows:

A nanoobject is an object for which at least one dimension is ≤ d* and at least one dimension exceeds d**. In other words, the object is large enough to have the macroproperties of a substance, yet it has reduced dimensionality, i.e. in at least one dimension it is small enough that the values of these properties differ strongly from those of macroobjects of the same substance and depend significantly on the size and shape of the object. The exact values of d* and d** can vary not only from substance to substance but also between different properties of the same substance.
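Read literally, this definition is a two-sided test on the sizes of the object. The sketch below encodes it; the thresholds of 1 nm for d** and 100 nm for d* and the sample sizes are placeholder assumptions, since the real values depend on the substance and on the property in question.

```python
from typing import Sequence


def is_nanoobject(dims_nm: Sequence[float], d_lower_nm: float, d_upper_nm: float) -> bool:
    """True if at least one size is <= d* (d_upper) and at least one size exceeds d** (d_lower)."""
    small_enough = any(d <= d_upper_nm for d in dims_nm)
    large_enough = any(d > d_lower_nm for d in dims_nm)
    return small_enough and large_enough


D_LOWER_NM = 1.0    # assumed placeholder for d**
D_UPPER_NM = 100.0  # assumed placeholder for d*

# Illustrative sizes in nanometers.
print(is_nanoobject((5000.0, 5000.0, 0.3), D_LOWER_NM, D_UPPER_NM))  # one-atom-thick sheet
print(is_nanoobject((0.15, 0.15, 0.15), D_LOWER_NM, D_UPPER_NM))     # a single atom
print(is_nanoobject((1e7, 1e7, 1e7), D_LOWER_NM, D_UPPER_NM))        # a bulk sample
```

With these placeholder thresholds the atomically thin sheet passes the test, while the single atom fails the lower bound and the bulk sample fails the upper bound.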

The fact that these considerations are by no means scholastic (like “how many grains of sand does a heap begin with?”), but have a deep meaning for understanding the unity of science and the continuity of the world around us, becomes obvious if we turn our attention to nano-objects of organic origin.

Nanoobjects of organic nature - supramolecular structures

Above, we considered only inorganic, relatively homogeneous materials, and even there things were not so simple. But there is a colossal amount of matter on Earth that is not merely difficult to call homogeneous but simply cannot be called so. We are talking about biological structures and living matter in general.

The National Nanotechnology Initiative cites the following as one of the reasons for special interest in the nanoscale area:

Since the systemic organization of matter at the nanoscale is a key feature of biological systems, nanoscience and technology will make it possible to incorporate artificial components and assemblies into cells, thereby creating new structurally organized materials based on imitation of self-assembly methods in nature.

Let us now try to understand what meaning the concept of “nanosize” has in its application to biology, bearing in mind that when moving to this size range the properties must fundamentally or dramatically change. But first, let us remember that the nanoregion can be approached in two ways: “top-down” (fragmentation) or “bottom-up” (synthesis). So, the “bottom-up” movement for biology is nothing more than the formation of biologically active complexes from individual molecules.

Let us briefly consider the chemical bonds that determine the structure and shape of the molecule. The first and strongest is a covalent bond, characterized by a strict direction (only from one atom to another) and a certain length, which depends on the type of bond (single, double, triple, etc.). It is the covalent bonds between atoms that determine the “primary structure” of any molecule, that is, which atoms are connected to each other and in what order.

But there are other types of bonds that determine what is called the secondary structure of the molecule, its shape. This is primarily the hydrogen bond - a bond between an electronegative atom and a hydrogen atom that is itself covalently attached to another electronegative atom. It is the closest to a covalent bond, since it too is characterized by a definite length and direction. However, this bond is weak: its energy is an order of magnitude lower than that of a covalent bond. The remaining types of interaction are non-directional and are characterized not by the length of the bonds formed, but by the rate at which the interaction energy falls off as the distance between the interacting atoms increases. Ionic bonding is a long-range interaction; van der Waals interactions are short-range. So, if the distance between two particles increases by a factor of r, then in the case of an ionic bond the attraction will decrease to 1/r² of its initial value, and in the case of the van der Waals interaction to 1/r³ or steeper (up to 1/r¹²). All these interactions can generally be described as intermolecular interactions.
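To get a feel for the difference between long-range and short-range interactions, one can compute how much of the initial attraction survives when the distance grows. The sketch below uses the exponents quoted in the paragraph above; the function name `attraction_ratio` is a hypothetical helper introduced only for this illustration.

```python
def attraction_ratio(distance_factor, exponent):
    """Fraction of the initial attraction left after the distance grows by
    `distance_factor`, for an interaction that falls off as 1/r**exponent."""
    if distance_factor <= 0:
        raise ValueError("distance_factor must be positive")
    return 1.0 / distance_factor ** exponent


# Exponents as quoted in the text: 2 for ionic, 3 up to 12 for van der Waals.
cases = [
    ("ionic, 1/r^2", 2),
    ("van der Waals, 1/r^3", 3),
    ("van der Waals, steepest case 1/r^12", 12),
]

for label, n in cases:
    # Doubling the distance between the two particles.
    print(f"{label}: {attraction_ratio(2.0, n):.6f}")
```

Doubling the distance leaves a quarter of the ionic attraction but only 1/4096 of an interaction that falls off as 1/r¹², which is why the latter matters only when surfaces are in very close contact.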

Let us now consider the concept of a “biologically active molecule”. It should be recognized that the molecule of a substance in itself is of interest only to chemists and physicists. They are interested in its structure (“primary structure”), its shape (“secondary structure”), macroscopic indicators such as state of aggregation, solubility, melting and boiling points, etc., and microscopic ones (electronic effects and the mutual influence of atoms in a given molecule, spectral properties as a manifestation of these interactions). In other words, we are talking about studying the properties exhibited in principle by a single molecule. Let us recall that, by definition, a molecule is the smallest particle of a substance that carries its chemical properties.

From the point of view of biology, an “isolated” molecule (in this case it does not matter whether it is one molecule or a number of identical molecules) is not capable of exhibiting any biological properties. This thesis sounds quite paradoxical, but let’s try to substantiate it.

Let's consider this using the example of enzymes - protein molecules that are biochemical catalysts - and related protein complexes. For example, hemoglobin, the protein that ensures the transfer of oxygen to tissues, consists of four protein molecules (subunits), each of which carries a so-called prosthetic group - heme, which contains an iron atom and is bound to the protein subunit non-covalently.

The main, or rather the determining, contribution to the interaction of the protein subunits and heme - the interaction that leads to the formation and stability of the supramolecular complex called hemoglobin - is made by forces that are sometimes called hydrophobic interactions but are in fact forces of intermolecular interaction. The bonds formed by these forces are much weaker than covalent ones. But in complementary interaction, when two surfaces come very close to each other, the number of these weak bonds is large, so the total interaction energy of the molecules is quite high and the resulting complex is quite stable. Until these bonds have formed between the four subunits, and until the prosthetic group (heme) has been added (again through non-covalent bonds), the individual parts of hemoglobin cannot under any circumstances bind oxygen, much less transport it anywhere. Therefore, they do not have this biological activity. (The same reasoning can be extended to all enzymes in general.)

Moreover, the process of catalysis itself implies that during the reaction a complex is formed from at least two components - the catalyst itself and the molecule or molecules, called substrates, that undergo some chemical transformation under the influence of the catalyst. In other words, a complex of at least two molecules must be formed, i.e., a supramolecular complex.

The idea of complementary interaction was first proposed by E. Fischer to explain the interaction of drugs with their target in the body and was called the “lock and key” interaction. Although drugs (and other biologically active substances) are not in all cases enzymes, they too are capable of causing a biological effect only after interacting with the corresponding biological target. And such an interaction, again, is nothing more than the formation of a supramolecular complex.

Consequently, the manifestation of fundamentally new properties by “ordinary” molecules (in the case under consideration, biological activity) is associated with the formation of supramolecular complexes with other molecules through the forces of intermolecular interaction. This is exactly how most enzymes and systems in the body are structured (receptors, membranes, etc.), including structures so complex that they are sometimes called biological “machines” (ribosomes, ATPase, etc.). And this happens precisely at the level of nanometer sizes - from one to several tens of nanometers.

With a further increase in complexity and size (more than 100 nm), i.e., on moving to another dimensional level (the microlevel), much more complex systems arise, capable not only of independent existence and interaction (in particular, energy exchange) with their environment, but also of self-reproduction. That is, the properties of the entire system change again - it becomes so complex that it is capable of self-reproduction, and what we call living structures arise.

Many thinkers have repeatedly tried to define Life. Without going into philosophical discussions, we note that, in our opinion, life is the existence of self-reproducing structures, and living structures begin with a single cell. Life is a micro- and macroscopic phenomenon, but the main processes that ensure the functioning of living systems occur at the nanoscale level.

The functioning of a living cell as an integrated self-regulating device with a pronounced structural hierarchy is ensured by miniaturization at the nanoscale level. It is obvious that miniaturization at the nanoscale level is a fundamental attribute of biochemistry, and therefore the evolution of life consists of the emergence and integration of various forms of nanostructured objects. It is the nanoscale section of the structural hierarchy, limited in size both above and below (!), that is critical for the appearance and ability to exist of cells. That is, it is the nanoscale level that represents the transition from the molecular level to the Living level.

However, even though miniaturization at the nanoscale level is a fundamental attribute of biochemistry, it is still impossible to regard every biochemical manipulation as nanotechnological - nanotechnology implies design, not the mere use of molecules and particles.

Conclusion

At the beginning of the article, we tried to classify the objects of the various natural sciences according to the characteristic sizes of the objects they study. Let us return to this classification and apply it: atomic physics, which studies interactions within the atom, deals with subangstrom (femto- and pico-) sizes.

"Ordinary" inorganic and organic chemistry are angstrom sizes, the level of individual molecules or bonds within crystals of inorganic substances. But biochemistry is the level of nanosize, the level of existence and functioning of supramolecular structures stabilized by non-covalent intermolecular forces.

But biochemical structures are still relatively simple, and they can function relatively independently (in vitro, if you like). Further complication, the formation of complex ensembles by supramolecular structures, is a transition to self-reproducing structures, a transition to the Living. Here, at the level of cells, we have micro-sizes, and at the level of organisms, macro-sizes. This is already biology and physiology.
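The classification by characteristic size can be summarized as a small lookup. This is a rough sketch, not a definition: the function name `size_level` is hypothetical, the nano range (1 to 100 nm) follows the figures mentioned in the text, and the boundary between micro and macro (taken here as 1 mm) is an assumption made only for illustration.

```python
def size_level(length_m):
    """Map a characteristic length in meters to the level named in the text."""
    if length_m <= 0:
        raise ValueError("length must be positive")
    if length_m < 1e-10:
        return "sub-angstrom (atomic physics)"
    if length_m < 1e-9:
        return "angstrom (chemistry)"
    if length_m <= 1e-7:          # up to 100 nm, as stated in the text
        return "nano (biochemistry)"
    if length_m < 1e-3:           # assumed micro/macro boundary of 1 mm
        return "micro (cells)"
    return "macro (organisms)"


examples = {
    "inside an atom": 5e-11,
    "covalent bond, about 1.5 angstrom": 1.5e-10,
    "supramolecular complex, a few nm": 5e-9,
    "cell, about 10 micrometers": 1e-5,
    "organism, about 1.7 m": 1.7,
}

for label, length in examples.items():
    print(f"{label}: {size_level(length)}")
```

As the text stresses, size alone does not decide the level: complexity matters at least as much, so a lookup like this is only a first approximation.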

The nanolevel is a transitional region from the molecular level, which forms the basis of the existence of all living things, to the level of the Living, the level of existence of self-reproducing structures. Nanoparticles, which are supramolecular structures stabilized by the forces of intermolecular interaction, represent a transitional form from individual molecules to complex functional systems. This can be reflected in a diagram that emphasizes, in particular, the continuity of Nature (Fig. 9). In the diagram, the nanoscale world is located between the atomic-molecular world and the world of the Living, which consists of the same atoms and molecules but organized into complex self-reproducing structures; the transition from one world to another is determined not only (and not so much) by the size of the structures as by their complexity. Nature long ago invented supramolecular structures and uses them in living systems. We are far from always able to understand, much less repeat, what Nature does easily and naturally. But we cannot expect favors from her; we have to learn from her.

Literature:

1) Vul A.Ya., Sokolov V.I. Nanocarbon research in Russia: from fullerenes to nanotubes and nanodiamonds // Russian Nanotechnologies, 2007. Vol. 3 (3–4).

2) Kats E.A. Fullerenes, carbon nanotubes and nanoclusters: a genealogy of forms and ideas. - M.: LKI, 2008.

3) Ostwald W. The world of neglected dimensions. - M.: Publishing house of the “Mir” partnership, 1923.

4) Piotrovsky L.B., Kiselev O.I. Fullerenes in biology. - Rostock, St. Petersburg, 2006.

5) Tkachuk V.A. Nanotechnologies and medicine // Russian Nanotechnologies, 2009. Vol. 4 (7–8).

6) Hobza P., Zahradnik R. Intermolecular complexes. - M.: Mir, 1989.

7) Mann S. Life as a nanoscale phenomenon. Angew. Chem. Int. Ed. 2008, 47, 5306–5320.

8) Pokropivny V.V., Skorokhod V.V. New dimensionality classifications of nanostructures // Physica E, 2008, v. 40, p. 2521–2525.

Nano - 10⁻⁹, pico - 10⁻¹², femto - 10⁻¹⁵.

Moreover, not only to see, but also to touch. “But he said to them: unless I see in His hands the marks of the nails, and put my finger into the marks of the nails, and put my hand into His side, I will not believe” [Gospel of John, chapter 20, verse 25].

For example, Democritus spoke about atoms as early as 430 BC. Dalton then argued in 1805 that: 1) elements are made of atoms, 2) the atoms of one element are identical and different from the atoms of another element, and 3) atoms cannot be destroyed in a chemical reaction. But only from the end of the 19th century did theories of the structure of the atom begin to develop, which caused a revolution in physics.

The concept of “nanotechnology” was introduced in 1974 by the Japanese scientist Norio Taniguchi. For a long time, the term was not widely used among specialists working in related fields, since Taniguchi used “nano” only to denote the precision of surface processing, for example, in technologies that make it possible to control the surface roughness of materials at a level of less than a micrometer.

The concepts of “fullerenes”, “carbon nanotubes” and “graphene” will be discussed in detail in the second part of the article.

An experimental illustration of this statement is the recently published development of technological methods for producing graphene sheets by “chemical cutting” and “unfolding” of carbon nanotubes.

The word “microscopic” is used here only because these properties were called that way earlier, although in this case we are talking about the properties exhibited by molecules and atoms, i.e., the pico-size range.

This, in particular, led to the emergence of the point of view that life is a phenomenon of nanometer dimensions [Mann, 2008], which, in our opinion, is not entirely true.